Below is a screenshot of a major success from the other night. It's a proof-of-concept of a very big idea, and it will need some explaining, and not only because prototypes are notoriously unfriendly and obscure.

It's a web-page, that's clear enough. You have to use your imagination somewhat and realize that the individual box over on the right was a live camera feed from the small astronomical telescope I now have set up in the back yard. It's a webcam video box.

That input image from the webcam is then being thrown into the computational coffee grinder, in the form of Fast Fourier Transforms implemented in the core of my 3D card's Texture Engine. You can see the original image in the top-left of the 'wall', and its FFT frequency analysis directly below it.

The bar graphs are center slices across the 'landscape' of the frequency image above them, because in the frequency domain we're dealing with complex numbers and negative values, which render badly into pixel intensities when debugging.

Doing a 2D FFT in real-time is pretty neat, but that's not all. The FFT from the current frame is compared against the previous frame (shown next to it, although without animation it's hard to see the difference) through a convolution (strictly speaking, a cross-correlation), which basically means multiplying the frames' transforms together in a special way, and taking the inverse Fourier transform of what you get.

The result of that process is the small dot in the upper right-hand quadrant box. That dot is a measurement of the relative motion of the star from frame to frame. It's a 'targeting lock'. Actually, it's several useful metrics in one:

- It's the 'correspondence map' of how similar both images are when one is shifted by the co-ordinates of the output pixel. So the center pixel is an indication of how well they line up exactly, the pixel to the left is an indication of how well they line up if the second image is shifted one pixel to the left, and so on.

- For frames which are entirely shifted by some amount, the 'lock' pixel will shift by the same amount. It becomes a 'frame velocity measurement'.

- If multiple things in the scene are moving, a 'lock' will appear for the velocity of each object, proportional to its size in the image.

- If the image is blurred by linear motion, the 'lock' pixel is blurred by the same amount. (The "Point Spread Function" of the blur)

- Images which are entirely featureless do not generate a clear lock.

- Images which contain repeating copies of the same thing will have a 'lock' pixel at the location of each repetition.

This is what happens when you 'convolve' two images in the frequency domain. Special tricks are possible which just can't be done per-pixel in the image. You get 'global phase' information about all the pixels, assuming you can think in those terms about images.
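The 'lock' behaviour in the list above can be sketched in a few lines of plain Python. This is only an illustration of the maths, not the WebGL code running on the graphics card: it uses a slow, naive DFT in place of a real FFT, and the names `dft`, `dft2`, `correlation_map` and `find_lock` are made up for this example. It also assumes the frames are small, equal-sized grids of brightness values, and that zero shift sits in the corner of the map (with wrap-around) rather than in the center as displayed.

```python
import cmath

def dft(seq, inverse=False):
    """Naive 1D discrete Fourier transform, O(n^2). Fine for tiny demo frames."""
    n = len(seq)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = sum(seq[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n)
                    for t in range(n))
        out.append(total / n if inverse else total)
    return out

def dft2(img, inverse=False):
    """2D transform: transform every row, then every column."""
    rows = [dft(row, inverse) for row in img]
    cols = [dft(list(col), inverse) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]

def correlation_map(prev, curr):
    """Multiply one spectrum by the conjugate of the other, then invert."""
    f_prev, f_curr = dft2(prev), dft2(curr)
    product = [[c * p.conjugate() for p, c in zip(row_p, row_c)]
               for row_p, row_c in zip(f_prev, f_curr)]
    return [[v.real for v in row] for row in dft2(product, inverse=True)]

def find_lock(corr):
    """Return the brightest pixel of the map as a signed (dx, dy) shift."""
    h, w = len(corr), len(corr[0])
    _, y, x = max((corr[y][x], y, x) for y in range(h) for x in range(w))
    # Indices past the halfway point wrap around to negative shifts.
    dx = x - w if x > w // 2 else x
    dy = y - h if y > h // 2 else y
    return dx, dy

# Two 8x8 frames containing a single one-pixel 'star' that moves
# two pixels right and one pixel up between frames.
prev_frame = [[0.0] * 8 for _ in range(8)]
curr_frame = [[0.0] * 8 for _ in range(8)]
prev_frame[3][2] = 1.0   # row 3, column 2
curr_frame[2][4] = 1.0   # row 2, column 4
print(find_lock(correlation_map(prev_frame, curr_frame)))
```

For the two demo frames the lock should land at a shift of two pixels right and one pixel up, i.e. `(2, -1)` with y counted downwards. A featureless frame gives a flat map with no meaningful maximum, which matches the 'no clear lock' case in the list.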

Of course you're wondering, "Sure, but what's the framerate on that thing? It's in a web browser." Well, as I type this, it's running in the window behind at 50fps. Full video rate. My CPU is at 14%.

So now you're thinking, "what kind of monster machine do you have that can perform multiple 256-point 2D Fourier transforms and image convolution in real-time, in a browser?" Well, I have an ATI Radeon 7750 graphics card.

Yup, the little one. The low-power version. Doesn't even have a secondary power connector. Gamers would probably laugh at it.

So the next question is, what crazy special pieces of prototype software am I running to do this? None. It's standard Google Chrome under Windows. I did have to make sure my 3D card drivers were completely up to date, if that makes you feel better. Also, three thousand lines of my code.

Most of this stuff probably wouldn't have worked 3-6 months ago... although WebGL gets lumped in with HTML5 (strictly, it's a separate Khronos specification that builds on the HTML5 canvas element), it's one of the last pieces to get properly implemented.

In fact, just two days ago Chrome added a shiny new feature which makes life much easier for people with multiple webcams / video sources. (i.e., me!) You can just click on the little camera to switch inputs, rather than digging three levels deep in the config menu. So it's trivial to have different windows attached to different cameras now. Thanks, Google!

The HTML5 spec is still lacking when it comes to multiple camera access, but that's less important now. (And probably "by design", since choosing among cameras might not be something you leave to random web pages...)

So, why?

Astromech, Version 0

This is where I should wax lyrical about the majesty of the night sky, the sweeping arc of our galaxy and its shrouded core. All we need to do is look up, and hard enough, and infinite wonder is arrayed in every direction, to the limits of our sight.

Those limitations can be pretty severe, though. Diffraction effects, Fresnel Limits, the limits of the human eye, CCD thermal noise, analog losses, and transmission errors. Not to mention clouds, wind, dew, and pigeons.

Professional astronomers spend all their days tuning and maintaining their telescopes. But weather is the great leveler, which means that a lucky amateur standing in the right field can have a better night than the pros. In fact, the combined capability of the Amateur Astronomy community is known to be greater, in terms of collecting raw photons, than the relatively few major observatories.

Modern-day amateurs have some incredible hardware, such as high-resolution DSLR digital cameras that can directly trace their ancestry to the first-gen CCDs developed for astronomical use. And the current generation of folded-optics telescopes is also a shock to those used to meter-long refractors.

Everyone is starting to hit the same limit: the atmosphere itself. Astronomy and astrophotography are becoming a matter of digital signal processing.

The real challenge is this: how do we take the combined data coming from all the amateur telescopes and 'synthesize' it into a single high-resolution sky map? Why, we would need some kind of web-based software which could access the local video feed and perform some advanced signal processing on it before sending it on through the internet.... ah.

Perhaps now you can see where I'm going with this.

Imagine Google Sky (the other mode of Google Earth) but where you can _see_ the real-time sky being fed from thousands of automated amateur observatories. (plus your contribution) Imagine the internet, crunching its way through that data to discover dots that weren't there before, or that have moved because they're actually comets, or just exploded as supernovae.

My ideas on how to accomplish this are moving fast, as I catch up on the latest AI research, but before the synthesis comes data acquisition.

Perhaps one fuzzy star and one fuzzy targeting lock isn't much, but it's a start. I've made a measurement on a star. Astrometrics has occurred.

|

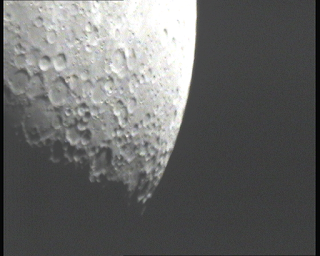

| The Moon, captured with a CCD security camera through a 105mm Maksutov Cassegrain Telescope |

Hopefully before my next shot at clear skies (it was cloudy last night, and today isn't looking any better) I can start pulling some useful metrics out of the convolution. The 'targeting dot' can be analysed to obtain not only the 'frame drift' needed to 'stack' all the frames together (to create high-resolution views of the target) but also to estimate the 'point spread function' needed for deblurring. With a known and accurate PSF, you can reverse the effects of blurring in software.
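The 'stacking' half of that plan is simple once the drift is known. Here is a minimal sketch in plain Python, assuming each frame's drift has already been measured as a whole-pixel `(dx, dy)` offset relative to the first frame (frame-to-frame locks would need to be added up to get that). The function names `shift_frame` and `stack_frames` are hypothetical, and edges simply wrap around, which a real stacker would handle more carefully.

```python
def shift_frame(frame, dx, dy):
    """Move the frame's contents by (dx, dy) pixels, wrapping at the edges."""
    h, w = len(frame), len(frame[0])
    return [[frame[(y - dy) % h][(x - dx) % w] for x in range(w)]
            for y in range(h)]

def stack_frames(frames, drifts):
    """Undo each frame's drift relative to the first frame, then average."""
    h, w = len(frames[0]), len(frames[0][0])
    total = [[0.0] * w for _ in range(h)]
    for frame, (dx, dy) in zip(frames, drifts):
        aligned = shift_frame(frame, -dx, -dy)  # shift back by the drift
        for y in range(h):
            for x in range(w):
                total[y][x] += aligned[y][x]
    return [[value / len(frames) for value in row] for row in total]

# Three views of the same one-pixel star, each drifted by a known amount.
base = [[0.0] * 4 for _ in range(4)]
base[1][1] = 1.0
drifts = [(0, 0), (1, 0), (-1, 2)]
frames = [shift_frame(base, dx, dy) for dx, dy in drifts]
stacked = stack_frames(frames, drifts)
```

Averaging aligned frames is what beats down the noise: the star adds up in the same place every time, while random noise does not. Sub-pixel drift and deblurring with the PSF are beyond this sketch.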

Yes, there is software that already does this (RegiStax, DeepSkyStacker, etc.), but it all works in 'off-line' mode, which means you have no idea how good your imagery really is until the next day. That's no help when you're in a cold field, looking at a dim battery-saving laptop screen through a car window, trying to figure out if you're still in focus.

So, a good first job for "Astromech" is to give me some hard numbers on how blurry it thinks the image is. That's probably today's challenge, although real life beckons with its paperwork and hassles.
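One candidate for such a number, offered purely as an assumption and not as what Astromech actually computes, is the spread of the 'lock' itself: since blur smears the lock by the same amount, the RMS radius of the lock's brightness around its own centroid gives a rough blur size in pixels. The sketch below uses a hypothetical `lock_spread` function and assumes the correlation map has already been arranged so the lock sits away from the edges (no wrap-around).

```python
import math

def lock_spread(corr):
    """RMS radius, in pixels, of the positive energy in a correlation map."""
    weights = [[max(value, 0.0) for value in row] for row in corr]
    total = sum(sum(row) for row in weights)
    if total == 0:
        return float("inf")  # featureless input: there is no lock to measure
    # Brightness-weighted centroid of the lock.
    cy = sum(y * v for y, row in enumerate(weights) for v in row) / total
    cx = sum(x * v for row in weights for x, v in enumerate(row)) / total
    # Brightness-weighted mean squared distance from that centroid.
    variance = sum(((y - cy) ** 2 + (x - cx) ** 2) * v
                   for y, row in enumerate(weights)
                   for x, v in enumerate(row)) / total
    return math.sqrt(variance)

# A crisp single-pixel lock versus one smeared across three pixels.
sharp = [[0.0] * 5 for _ in range(5)]
sharp[2][2] = 1.0
smeared = [[0.0] * 5 for _ in range(5)]
smeared[2][1] = smeared[2][2] = smeared[2][3] = 1.0
print(lock_spread(sharp), lock_spread(smeared))
```

A smaller number means a tighter lock and, hopefully, better focus. On real data the background noise in the map would need to be thresholded away first, or it would dominate the measurement.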

It's fun to be writing software in this kind of 'experimental, explorative' mode, where you're literally not sure if something is possible until you actually try it. I've found myself pulling down a lot of my old Comp Sci textbooks with names like "Advanced Image Processing", skipping quickly through to the last chapter, and then being disappointed they end there. In barely a month, I've managed to push myself right up to the leading edge of this topic. I've built software of a kind that has only existed before in research labs, and it's almost ready for mass release. There's every chance it will run on next-gen mobile phones.

This is gonna work.
